One thing to learn about electric power is that as the voltage is increased, AC or DC, the amperage needed to accomplish the same work goes down.

Lower voltage will increase the amperage needed to accomplish the same work.

I don't remember the formula now for figuring out the amps needed at a given voltage to supply the watts used to do the work.
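For reference, the relationship is watts = volts × amps, so amps = watts / volts. Here is a minimal Python sketch of that, assuming a load that needs a fixed number of watts (and ignoring power factor on AC). The function name `amps_needed` and the 120 watt load are made up for illustration.

```python
def amps_needed(watts: float, volts: float) -> float:
    """Current in amps required to deliver `watts` at `volts` (P = V * I)."""
    if volts <= 0:
        raise ValueError("volts must be positive")
    return watts / volts


# The same hypothetical 120 W load at three supply voltages.
for volts in (12.0, 24.0, 120.0):
    print(f"{volts:>5.0f} V -> {amps_needed(120.0, volts):.1f} A")
```

This only holds for a load that draws the same watts regardless of voltage. Many real loads do not behave that way.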

Amps do the work, not Volts

You have to be careful applying that rule to centrifugal devices like pumps. They are governed by the affinity laws.

Affinity laws state that the flow is the square of the speed and the hp required is the cube of the flow. In a DC motor, speed is proportional to voltage. So drop the voltage the hp drops a lot so amps actually go down. Also a motor is a torque matching device so it does not follow ohm's law.

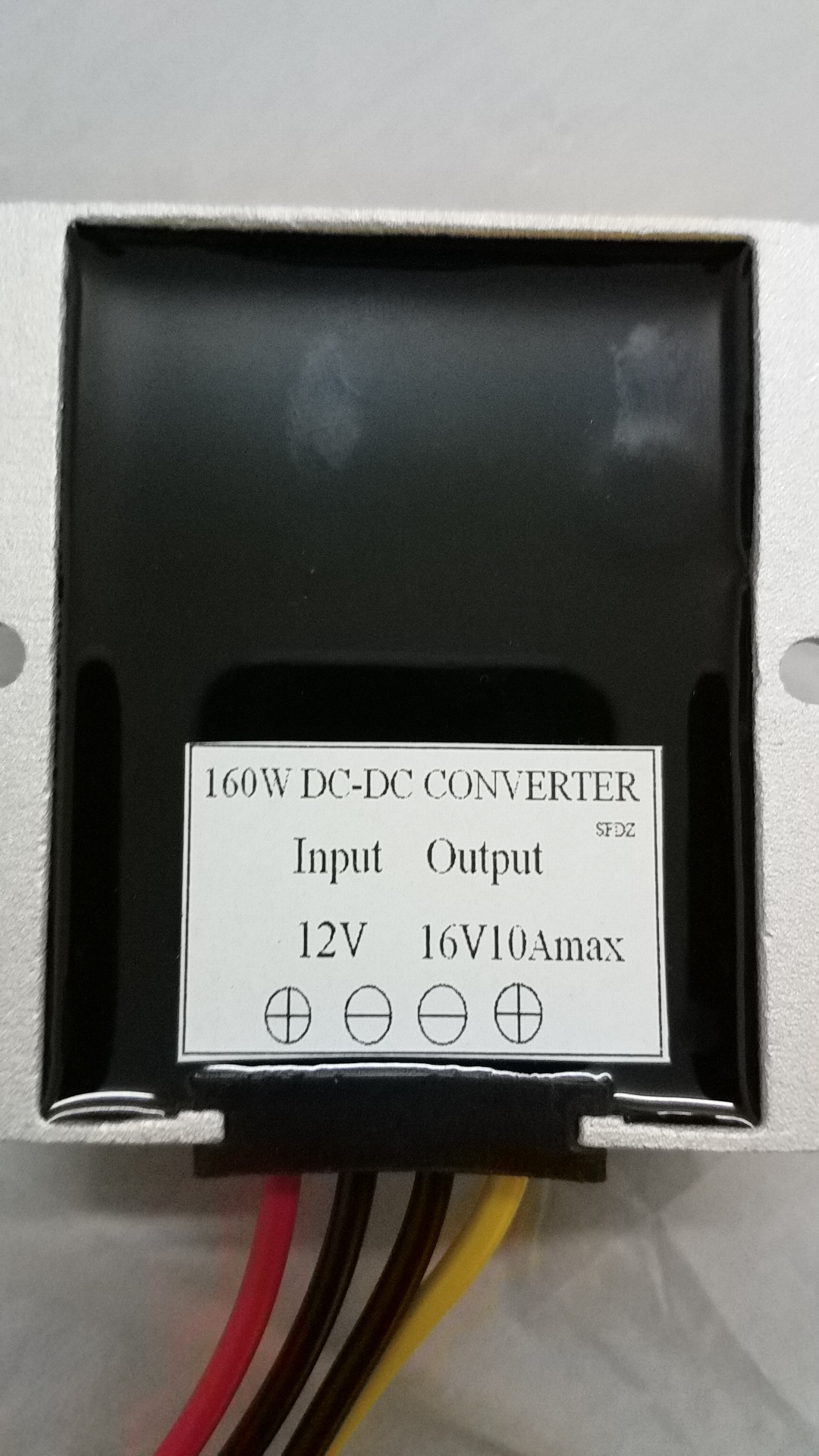

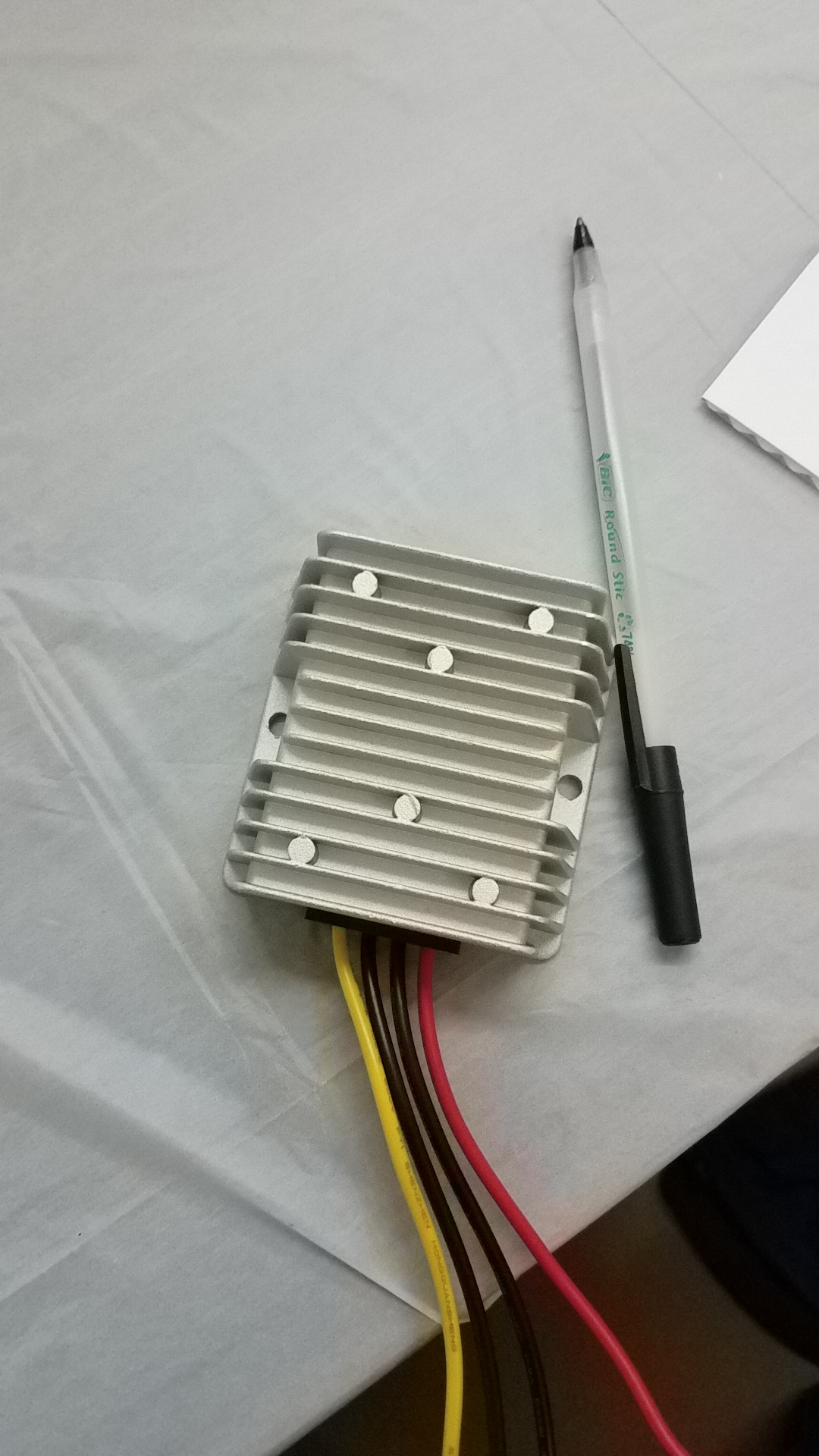

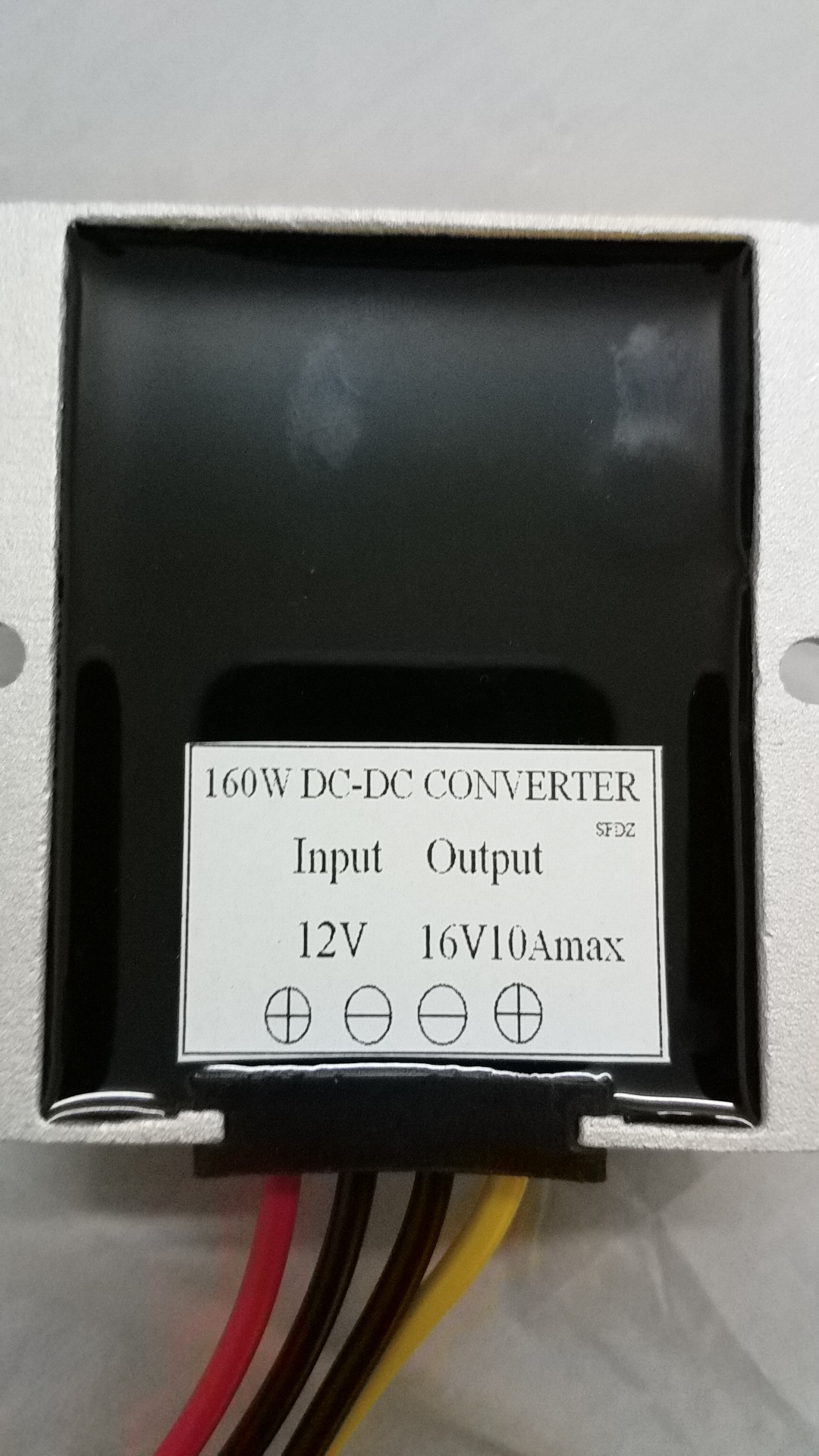

Ignoring losses, assume a water or fuel pump draws 10 amps @ 16 volts. That equals to 160 watts.

Drop the voltage to 12 volts, voltage drops 25%, the flow drops to 56.25% of previous flow and horsepower drops 42.19% of previous hp (67.5 watts). So 67.5/12 = 5.62 amps.

So in this case when the voltage drops from 16 to 12 volts the amps actually drop from 10 to 5.62 amps and you have 56.25% of the original flow. Pretty sure my math is right. Results would not be exact due to losses and some other considerations, but it won't be that far off.
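The 16 volt to 12 volt example can be checked with a short Python sketch. It uses the usual textbook form of the affinity laws (flow proportional to speed, head to speed squared, shaft power to speed cubed) and assumes, as above, that motor speed tracks voltage and that losses are ignored. The function name `scale_pump` and its dictionary keys are made up for illustration.

```python
def scale_pump(volts_old: float, volts_new: float, amps_old: float) -> dict:
    """Estimate a centrifugal pump's new operating point after a voltage change.

    Assumptions: speed is proportional to voltage, no losses,
    and the ideal affinity laws apply.
    """
    ratio = volts_new / volts_old          # assumed speed ratio
    watts_old = volts_old * amps_old       # P = V * I at the old point
    watts_new = watts_old * ratio ** 3     # power scales with speed cubed
    return {
        "flow_fraction": ratio,            # flow scales with speed
        "head_fraction": ratio ** 2,       # pressure scales with speed squared
        "power_fraction": ratio ** 3,
        "watts": watts_new,
        "amps": watts_new / volts_new,     # I = P / V at the new voltage
    }


result = scale_pump(volts_old=16.0, volts_new=12.0, amps_old=10.0)
for name, value in result.items():
    print(f"{name}: {value:.4f}")
```

With these inputs the sketch gives 67.5 watts and about 5.62 amps, matching the hand calculation. A real pump and motor will land somewhat off these numbers.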

It also works in the other direction. When I went from a 50 amp to a 120 amp alternator, my running voltage went from around 11.8 to just under 14 volts. I dropped over 20 degrees returning to the pits after a run because of these laws. My amp requirement went up a lot!